Warning: if you got here searching for information about the 35th president of the United States of America, you are in the wrong place! This post (and the related ones) has nothing to do with politics or the above president, this is only Java!

Another warning: I have some strong opinions. You can disagree with me as much as you want, but please keep in mind I'm open only to constructive discussions.

JFK stands for Java Functional Kernel, and it is a framework that brings the power of functions to the Java world. Java does not use the term "function", preferring the term "method", but the two are, at least as far as JFK is concerned, the same. A function/method is a code block that you can call, passing arguments on the stack and getting back a result.

But while in Java first-class elements are classes, and therefore you cannot abstract a method outside a class, JFK brings the power of function pointers to Java, allowing you to use functions (or methods, if you prefer such term) as first-class entities too.

"Wait a minute, Java does not allow function pointers!"

That is true, standard Java does not allow them, but JFK does.

"I don't need function pointers in Java, Java is a modern OOP language where function pointers are not useful and, besides, I think they are evil!"

If this is what you are thinking, well, I will not call you an idiot because I'm polite, but you probably should not read this; you should go back to your seat and continue typing some standard Java program on your keyboard.

"An OOP language cannot admit function pointers!"

Ok, now I'm really thinking you are an idiot, so please move away. Being OOP does not mean that function pointers are not allowed, but rather that they must be objects too.

Now if you think this can bring a new way of developing Java applications and can increase your expressiveness, keep reading.

So what do you get with JFK?

JFK enables you to exploit three main features:

- function pointers

- closures

- delegates (in a way similar to C#)

At the time of writing JFK is at version 0.5, which is stable enough to run all the tests and the examples reported here. The project is not yet available as Open Source, but it will be very soon, probably under the GNU GPLv3 license. In the following I will detail each of the above features.

Please take into account that JFK is not reflection! Java already has something similar to a function pointer, that is the Method object of the reflection package, but JFK does not use it. In JFK everything is done directly, without reflection, in order to speed up execution.

Function pointers

The first question is: why do I need function pointers? Do I really need an abstraction over methods/functions? Well, I think YES!

Just as OSGi provides a mechanism to export only some packages at run-time, function pointers provide the ability to export functions without having to export objects. To better understand, imagine the following situation: you've got an object (Service Object) that has two service methods, called M1 and M2. Now you've got two processes (whatever a process means to you) that must each use only one of the methods, so imagine that the first process must use M1 and the second one M2. Since M1 and M2 are defined in the same class, the only solution is to share the service object between the two processes, as shown in the following picture.

This solution is not modular at all, since both processes must keep a reference to a shared object. A better solution, without having to write a wrapper object for every method, is to create a set of interfaces, each one tied to a method. In this way the service object can implement every interface it must expose, and the processes can hold a reference to the interface (and therefore to the service object). The situation is shown in the following figure.

This approach has several drawbacks:

- a new interface is needed to modularize every exposed method;

- it is still possible to inspect, thru reflection, the reference and understand which object it is "hiding".

The first drawback requires developers to write a lot of code, and this is what happens with normal event handlers, such as ActionListener: you have to declare a single-method interface for every method you want to expose. The second drawback is a security hole: with reflection the reference holder can inspect the object, discover that it has the method M2, and even call it.
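To make the second drawback concrete, here is a minimal sketch in plain Java. The names M1Service, M2Service and ServiceObject are hypothetical stand-ins for the interfaces and the service object described above; only the standard reflection API is used. The first process receives nothing but an M1Service reference, and still reaches M2.

```java
import java.lang.reflect.Method;

// Hypothetical single-method interfaces, one per exposed service method.
interface M1Service { String m1(); }
interface M2Service { String m2(); }

// The service object implements every interface it wants to expose.
class ServiceObject implements M1Service, M2Service {
    public String m1() { return "result of M1"; }
    public String m2() { return "result of M2"; }
}

class InterfaceLeakDemo {
    // The caller is handed only an M1Service reference, yet reflection
    // reveals the class behind it and lets the caller invoke M2 as well.
    static String callHiddenM2(M1Service onlyM1) {
        try {
            Method hidden = onlyM1.getClass().getMethod("m2");
            return (String) hidden.invoke(onlyM1);
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        M1Service forProcessOne = new ServiceObject();
        System.out.println(forProcessOne.m1());
        System.out.println(callHiddenM2(forProcessOne));
    }
}
```

The interface narrows what the compiler lets you call, but not what the object actually is at run-time.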

So, while very OOP, this approach has limitations that can be overcome with the adoption of function pointers.

With function pointers it is possible to expose only pointers to a method M1 or to M2 without having to expose the object (or its interfaces) to the consumer processes, as shown in the following picture.

This is a very modular way of doing things: you don't have to worry about the service object, introspection against it, or even where the object is stored/held. You are handing out only the pointer to one of the functions, and the receiver process will be able to exploit only the method/function behind that pointer.

"Ok, I can get this for free with java.lang.reflect.Method objects"

Again, this is not reflection! Reflection is slow. Reflection is limited. This is a direct method call thru a pointer object! And no, this is not a fancy use of proxies/interceptors! Note that with reflection you are able to invoke only local methods, while the JFK approach would let you call even remote functions without having to deal with RMI objects and stubs. This feature is not implemented yet, but it is possible. Please keep in mind that, at the time of writing, JFK is still a proof of concept, so not all possible features have been implemented!

Keeping an eye on security, JFK does not allow a program to get a function pointer to just any method available in a class/object: a method must be explicitly exported. That is, when you define a class and its methods, you have to explicitly mark the methods you want to make pointable. This allows you to define with fine granularity which services (as functions) each class exports. Exporting a method is really simple: you mark it with the @Function annotation, indicating a name, that is, an identifier used to refer to that specific method (it can be the same as the method name or something with a different meaning, like 'Service1'). Let's see an example:

public class DummyClass {

public final String resultString = "Hello JFK!";

public String aMethodThatReturnsAString(){

return resultString;

}

@Function( name = "double" )

public Double doubleValue( Double value ){

return new Double( value.doubleValue() * 2 );

}

@Function( name = "string" )

public String composeString( Integer value ){

return resultString + value.intValue();

}

@Function( name = "string2" )

public String composeStringInteger(String s, Integer value ){

return s + value.toString();

}

}

The above class exports three instance methods, with different identifiers. For instance, the method composeStringInteger is exposed with the identifier 'string2'. This can be used from a program in the following way:

// get a pointer to another function

// the "double" function returns a computation of a double

// passed on the stack (dummy is a DummyClass instance)

IFunction function = builder.bindFunction(dummy, "double" );

dummy = null; // note that the dummy object is no more used!!!

Double d1 = new Double(10.5);

Double d2 = (Double) function.executeCall( new Object[]{ d1 } );

System.out.println("Computation of the double function returned " + d2);

// it prints

// Computation of the double function returned 21.0

As you can see, you can obtain a function pointer to an object method that is marked as 'double', and then execute the function with IFunction.executeCall(..). It's that easy!

So to recap, you can get an IFunction object bound to a method identified by an exported name, and you can call the executeCall method on that IFunction in order to execute the function it points to. I stress it again: this is not reflection! Moreover, IFunction is an interface without an implementation, which means there is nothing static here: all the code is dynamically generated at run-time.

Being dynamic does not mean that there are no checks and constraints: before invoking the function, the system checks the number of arguments, the argument types and so on, and throws appropriate exceptions (e.g., BadArityException).
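The following is only a sketch of what such a pre-call check could look like, not the JFK implementation. The CheckedFunction class is hypothetical, the BadArityException defined here is a stand-in for the one named above, and the use of IllegalArgumentException for a type mismatch is my assumption. Only the executeCall(Object[]) shape comes from the examples in this post.

```java
// Stand-in for the BadArityException mentioned in the text (assumed unchecked).
class BadArityException extends RuntimeException {
    BadArityException(String message) { super(message); }
}

// Hypothetical wrapper: validates the arguments, then calls the target directly.
abstract class CheckedFunction {
    private final Class<?>[] parameterTypes;

    CheckedFunction(Class<?>... parameterTypes) {
        this.parameterTypes = parameterTypes;
    }

    // The direct (non-reflective) call to the target method goes here.
    protected abstract Object call(Object[] args);

    public final Object executeCall(Object[] args) {
        // Check the number of arguments first.
        if (args.length != parameterTypes.length) {
            throw new BadArityException("expected " + parameterTypes.length
                    + " argument(s), got " + args.length);
        }
        // Then check the type of each argument (null is rejected too).
        for (int i = 0; i < args.length; i++) {
            if (!parameterTypes[i].isInstance(args[i])) {
                throw new IllegalArgumentException("argument " + i
                        + " is not a " + parameterTypes[i].getSimpleName());
            }
        }
        return call(args);
    }
}

class ArityCheckDemo {
    // Mirrors the 'double' function of DummyClass.
    static CheckedFunction doubleFunction() {
        return new CheckedFunction(Double.class) {
            protected Object call(Object[] args) {
                return Double.valueOf(((Double) args[0]).doubleValue() * 2);
            }
        };
    }

    public static void main(String[] args) {
        CheckedFunction f = doubleFunction();
        System.out.println(f.executeCall(new Object[]{ Double.valueOf(10.5) }));
        try {
            f.executeCall(new Object[]{});
        } catch (BadArityException e) {
            System.out.println("rejected: " + e.getMessage());
        }
    }
}
```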

Now, inspecting the stack trace of the IFunction.executeCall(..) you will never see a Method.invoke(..) or stuff like that (do you remember that this is not reflection?).

Performance is greatly improved with JFK when compared to reflection. For instance, calling the 'double' function takes around 12850 nanoseconds with JFK, while it takes 324133 nanoseconds using reflection (in particular, 292914 ns to find the method and 31219 ns to invoke it). So a simple method execution runs about 25 times faster, and there is more: since IFunction objects are cached, once they are bound to a function the execution of the pointer is almost immediate!
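The reflection figure above is the sum of two parts (292914 ns + 31219 ns = 324133 ns), and 324133 / 12850 is roughly 25.2. If you want to see the two parts on your own machine, the sketch below times the lookup and the invocation separately using only the standard reflection API. DoubleService is a hypothetical stand-in for DummyClass, and a single-shot measurement like this is very noisy, so do not expect to reproduce the numbers quoted here.

```java
import java.lang.reflect.Method;

// Hypothetical stand-in for the 'double' method of DummyClass.
class DoubleService {
    public Double doubleValue(Double value) {
        return Double.valueOf(value.doubleValue() * 2);
    }
}

class ReflectionTimingDemo {
    // Returns { lookupNanos, invokeNanos, result } for one reflective call.
    static Object[] timeReflectiveCall(Double argument) {
        try {
            DoubleService target = new DoubleService();
            long start = System.nanoTime();
            // Part 1: find the method by name and parameter types.
            Method method = DoubleService.class.getMethod("doubleValue", Double.class);
            long found = System.nanoTime();
            // Part 2: invoke it thru the Method object.
            Object result = method.invoke(target, argument);
            long invoked = System.nanoTime();
            return new Object[]{
                Long.valueOf(found - start), Long.valueOf(invoked - found), result };
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        Object[] timing = timeReflectiveCall(Double.valueOf(10.5));
        System.out.println("lookup: " + timing[0] + " ns");
        System.out.println("invoke: " + timing[1] + " ns");
        System.out.println("result: " + timing[2]);
    }
}
```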

Closures

Closures are pieces of anonymous code that can be executed as first-class entities. In simple words, closures are like Java methods that can be defined on the fly and that do not belong to any particular class.

Java does not support closures, but something similar can be obtained with anonymous inner classes. Having function pointers, closures come almost for free, so that in your code you can do something like the following:

IClosureBuilder closureBuilder = JFK.getClosureBuilder();

IFunction closure = closureBuilder.buildClosure("public String concat(String s, Integer i){ return s + i.intValue(); }");

// now use the closure, please note that there

// is no object/class created here!

String closureResult = (String) closure.executeCall( new Object[]{

"Hello JFK!", new Integer(1234) } );

System.out.println("Closure result: " + closureResult);

// it prints

// Closure result: Hello JFK!1234

Closures are defined as IClosure objects, which are a special case of IFunction objects. While IFunction objects point to an existing method, IClosure objects point to a method that does not exist yet and that is not exposed thru any class/object. Again, there is no reflection here, and there is no static implementation of IClosure available. Execution times are of the same order as the IFunction ones, but closures are not cached in any way, since they are meant to be one-shot execution units. I haven't checked whether my approach is the same as that of Groovy closures; I suspect there is something similar there.
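For comparison, this is how the anonymous inner class workaround mentioned at the beginning of this section could look for the same concat behaviour. The Concat interface is a hypothetical name of mine. Note the difference from the closure example: here you must declare an interface up front, and the compiler still generates a class for the anonymous body.

```java
// Hypothetical single-method interface standing in for a closure type.
interface Concat {
    String concat(String s, Integer i);
}

class AnonymousClassDemo {
    static Concat buildConcat() {
        // The behaviour is defined on the fly, but the compiler still
        // generates a class (AnonymousClassDemo$1) to hold it.
        return new Concat() {
            public String concat(String s, Integer i) {
                return s + i.intValue();
            }
        };
    }

    public static void main(String[] args) {
        String result = buildConcat().concat("Hello JFK!", Integer.valueOf(1234));
        System.out.println("Closure-like result: " + result);
    }
}
```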

Delegates

Delegates are something introduced by C# to allow an easy way to simulate function pointers for event handling. JFK provides a declarative way of defining delegates and their associations that is somewhat similar to the signal-slot mechanism of Qt. First of all, a little terminology:

- a delegate is the implementation of a behaviour (this is similar to a slot in the Qt terminology)

- a delegatable is an object and/or a method that can be bound to a delegate, that is, to a concrete implementation (this is similar to a signal in the Qt terminology)

So for instance, with the well known example of the ActionEvent, it is possible to say that the method actionPerformed(..) is a delegatable, while the implementation of actionPerformed(..) is the delegate.

The ideas behind JFK delegates are the following:

- delegatable methods could be abstract (the implementation does not matter when the delegate is declared)

- adding and removing a delegate instance should be dynamic and should not burden the delegatable instance

Let's see each point with an event based example; consider the following event generator class:

public abstract class EventGenerator implements IDelegatable{

public void doEvent(){

for( int i = 0; i < 10; i++ )

this.notifyEvent( "Event " + i );

}

@Delegate( name="event", allowMultiple = true )

public abstract void notifyEvent(String event);

}

The above EventGenerator class is a skeleton for an event provider, such as a button, a text field, or something else. The idea is that when the doEvent() method is executed, an event is dispatched thru the notifyEvent(..) method.

As for the first point, the notifyEvent(..) method can be abstract, as it is in this example: since its implementation will be provided by someone else (the event consumer), it does not matter here. Leaving the delegatable method abstract means that you cannot instantiate EventGenerator without having bound it to a method implementation. If you need to be able to instantiate it, you can provide a method body (even an empty one), keeping in mind that it will be replaced by a connection to the delegate that must execute the method. The delegatable method must be annotated with the @Delegate annotation, where you can specify a name (similar in aim to the function name) and a flag that states whether the delegatable can be connected to multiple delegates.

And here comes the second point: note how the delegatable method is called only once. Even when multiple delegates are connected to the delegatable, the JFK kernel takes care of executing all of them. In standard Java you have to write:

public void doEvent(){

for( int i = 0; i < 10; i++ )

for( MyEventListener l : this.listeners )

l.notifyEvent( "Event " + i );

}

where listeners and MyEventListener are, respectively, a list of listeners and the interface associated with the listener. Can you see the extra loop to notify all the listeners?

It means that the producer has to keep track of all the consumers, and this is wrong! It tightly couples producers and consumers, and this is an awkward implementation. In fact, I think that the event mechanism as implemented by standard Java is not a decoupling mechanism, and this is why I tend to prefer AspectJ/AOP event notifications (as implemented in WhiteCat). Again, there is no reflection here, and the kernel is not keeping track of all the consumers; rather, it manages a set of function pointers to consumers. It's that easy!
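For completeness, here is a self-contained version of the standard Java listener approach criticized above, so that you can see everything the producer has to carry around. ListenerEventGenerator and RecordingListener are hypothetical names; MyEventListener and the listeners list are the ones from the snippet.

```java
import java.util.ArrayList;
import java.util.List;

// The single-method interface every consumer must implement.
interface MyEventListener {
    void notifyEvent(String event);
}

// The producer has to store, add, remove and loop over its consumers.
class ListenerEventGenerator {
    private final List<MyEventListener> listeners = new ArrayList<MyEventListener>();

    public void addListener(MyEventListener listener) { listeners.add(listener); }

    public void removeListener(MyEventListener listener) { listeners.remove(listener); }

    public void doEvent() {
        for (int i = 0; i < 10; i++)
            for (MyEventListener l : this.listeners)
                l.notifyEvent("Event " + i);
    }
}

// A consumer that simply remembers what it received.
class RecordingListener implements MyEventListener {
    final List<String> received = new ArrayList<String>();

    public void notifyEvent(String event) { received.add(event); }
}

class ListenerDemo {
    public static void main(String[] args) {
        ListenerEventGenerator generator = new ListenerEventGenerator();
        RecordingListener first = new RecordingListener();
        RecordingListener second = new RecordingListener();
        generator.addListener(first);
        generator.addListener(second);
        generator.doEvent();
        System.out.println("first received " + first.received.size() + " events");
        System.out.println("second received " + second.received.size() + " events");
    }
}
```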

Now let's see how you can use the delegates; first you have to implement a behaviour for the delegate, assume we have the following two:

public class EventConsumer implements IDelegate{

@Connect( name="event" )

public void consumeEvent(String event){

System.out.println("\n\t********** Cosuming event "

+ event + "\n\n\n");

}

}

public class EventConsumer2 implements IDelegate {

@Connect( name="event" )

public void consumeEvent2( String e ){

System.out.println("\n\t**********>>>>>>> Cosuming event " + e + "\n\n\n");

}

}

Both event consumers have a method with the same signature as the delegatable one; before binding methods, the JFK kernel checks that the signatures are compatible. Both methods are annotated with the @Connect annotation, which specifies the name of the delegatable to connect to. Now you can write a program that does the following:

IDelegateManager manager = JFK.getDelegateManager();

IDelegatable consumer = (IDelegatable) manager.createAndBind(

EventGenerator.class, new EventConsumer() );

// now the delegate will invoke the abstract method,

// that has been defined at run-time to match the

// consumer method in the EventConsumer object

((EventGenerator) consumer).doEvent();

// it prints

// ********** Cosuming event Event 0

// ....

// ********** Cosuming event Event 9

// now it is possible to add another consumer to the event generator,

// since it allows a multiple

// connection. To do this, we can add another delegate to the instance

manager.addDelegate(consumer, new EventConsumer2() );

// now each call to the abstract method is dispatched

// by the kernel to both consumer methods, the one in

// EventConsumer and the one in EventConsumer2

((EventGenerator) consumer).doEvent();

// it prints

// ********** Cosuming event Event 0 <- from EventConsumer

// **********>>>>>>> Cosuming event Event 0 <- from EventConsumer2

// ....

// ********** Cosuming event Event 9 <- from EventConsumer

// **********>>>>>>> Cosuming event Event 9 <- from EventConsumer2

First of all you need a delegate manager, which you ask to instantiate an EventGenerator object (you cannot instantiate it directly in this example because it has abstract methods), binding it to an EventConsumer instance. This produces a new instance of EventGenerator that will call and execute EventConsumer.consumeEvent(..) each time EventGenerator.notifyEvent(..) is called. Afterwards, you can dynamically add (and remove) other delegates to the running instance of EventGenerator, so that all the associated delegates will be executed when the EventGenerator.notifyEvent(..) method is called.

I stress it again: note that the event generator does not deal with all the possible event consumers, the JFK kernel does it! And again, there is no reflection involved here, everything happens as a direct method call!
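If you want a mental model of the result, the sketch below is a hand-written picture of the kind of subclass a kernel could produce. This is purely my illustration and not how JFK is implemented: JFK generates its code at run-time, the internals are not shown in this post, and the names EventPointer, BoundEventGenerator and addDelegate(EventPointer) are all hypothetical. The EventGenerator here is a simplified copy without the JFK interface and annotation, so that the sketch compiles on its own. The point is that the generator source contains no listener list and no extra loop.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical pointer type: one direct call per bound delegate.
interface EventPointer {
    void call(String event);
}

// Simplified copy of the event generator: no listener list, no extra loop.
abstract class EventGenerator {
    public void doEvent() {
        for (int i = 0; i < 10; i++)
            this.notifyEvent("Event " + i);
    }

    public abstract void notifyEvent(String event);
}

// Hand-written picture of a subclass that could be generated at run-time.
class BoundEventGenerator extends EventGenerator {
    private final List<EventPointer> delegates = new ArrayList<EventPointer>();

    void addDelegate(EventPointer pointer) { delegates.add(pointer); }

    // Every call to the delegatable is forwarded to all bound pointers.
    public void notifyEvent(String event) {
        for (EventPointer pointer : delegates)
            pointer.call(event);
    }
}

class DelegateSketchDemo {
    // Binds two consumers and returns everything they received, in order.
    static List<String> run() {
        final List<String> log = new ArrayList<String>();
        BoundEventGenerator generator = new BoundEventGenerator();
        generator.addDelegate(new EventPointer() {
            public void call(String event) { log.add("first: " + event); }
        });
        generator.addDelegate(new EventPointer() {
            public void call(String event) { log.add("second: " + event); }
        });
        generator.doEvent();
        return log;
    }

    public static void main(String[] args) {
        for (String line : run())
            System.out.println(line);
    }
}
```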

The following picture illustrates how you can imagine the delegate works in JFK:

What else can I do with JFK?

Well, JFK is a functional kernel, so you can do whatever you do with functions. For instance, you can pass a function pointer to another method/function, enabling functional programming!
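As a small sketch of what passing a function pointer around could look like, the code below defines a stand-in IFunction interface (only the executeCall(Object[]) method is taken from the examples in this post; the real JFK interface may have more) and a hypothetical applyToAll method that receives the pointer as an argument. The doubler is written by hand here, whereas with JFK you would obtain it by binding an exported method.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Stand-in for JFK's IFunction; only executeCall(Object[]) comes from the article.
interface IFunction {
    Object executeCall(Object[] args);
}

class HigherOrderDemo {
    // Receives a function pointer and applies it to every element of the list.
    static List<Object> applyToAll(IFunction function, List<?> values) {
        List<Object> results = new ArrayList<Object>();
        for (Object value : values) {
            results.add(function.executeCall(new Object[]{ value }));
        }
        return results;
    }

    // Hand-written stand-in for a pointer to the 'double' function.
    static IFunction doubler() {
        return new IFunction() {
            public Object executeCall(Object[] args) {
                return Double.valueOf(((Double) args[0]).doubleValue() * 2);
            }
        };
    }

    public static void main(String[] args) {
        List<Double> input = Arrays.asList(1.5, 2.0, 10.5);
        System.out.println(applyToAll(doubler(), input));
        // it prints
        // [3.0, 4.0, 21.0]
    }
}
```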

A final note on reflection

In this article I wrote several times that JFK does not use reflection. This is not entirely true. As you probably noted, the current implementation is based on annotations, and this means that in order to get annotations and their values, JFK needs reflection. What must be clear is that method execution thru function pointers does not use reflection at all!
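To show where reflection is unavoidable, the sketch below reads annotation values with the standard reflection API. The @Function annotation defined here is only a stand-in with a single name() element (the real JFK definition is not shown in this post), and Exported and exportedFunctions are hypothetical names. Reflection is used once, to discover which methods are exported; nothing is invoked thru it.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;
import java.util.Map;
import java.util.TreeMap;

// Stand-in for JFK's @Function; it must be retained at run-time to be readable.
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.METHOD)
@interface Function {
    String name();
}

// Hypothetical class exporting two of its three methods.
class Exported {
    public String notExported() { return "hidden"; }

    @Function(name = "double")
    public Double doubleValue(Double value) {
        return Double.valueOf(value.doubleValue() * 2);
    }

    @Function(name = "string")
    public String composeString(Integer value) {
        return "Hello JFK!" + value.intValue();
    }
}

class AnnotationScanDemo {
    // Maps each exported identifier to the name of the Java method behind it.
    static Map<String, String> exportedFunctions(Class<?> type) {
        Map<String, String> exported = new TreeMap<String, String>();
        for (Method method : type.getDeclaredMethods()) {
            Function marker = method.getAnnotation(Function.class);
            if (marker != null) {
                exported.put(marker.name(), method.getName());
            }
        }
        return exported;
    }

    public static void main(String[] args) {
        System.out.println(exportedFunctions(Exported.class));
        // it prints
        // {double=doubleValue, string=composeString}
    }
}
```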

(Some) Implementation Details

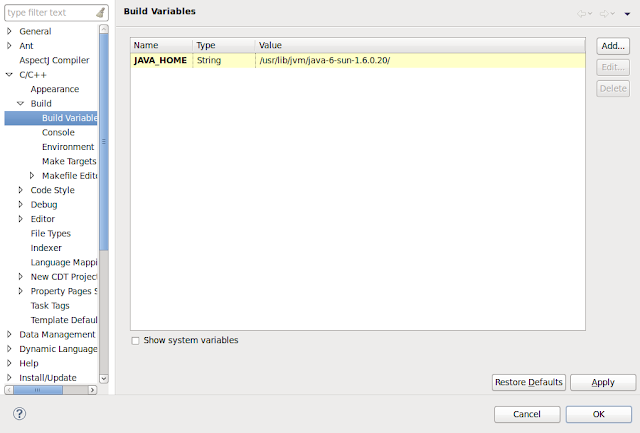

I'm not going to show all the internals; for now it suffices to know that this project is developed in standard Java (J2SE6) and, strangely, it is still not an AspectJ project, unlike almost every project I run. All the configuration of the run-time system is done using Spring, and there is a test suite (JUnit 4) that stresses the system and its functionality.

Well, at the moment this is a private research project of mine, so I cannot show you all the details because they are still changing (but the API is stable). I've created a page on the Open Source University Meetup where discussions can happen, besides my blog. If you need more info, or want to collaborate on the project, feel free to contact me.